The Google Adplanner service gives advertisers more information on popular websites, including demographic and traffic data. Whilst I don’t want to advertise on any of these sites, we can still use the information to compare the portals and major brands in our industry, and the results are a mix of the predictable and the not so predictable.

This article focuses on portals; the follow-up article will focus on the major real estate groups.

Real Estate Portals

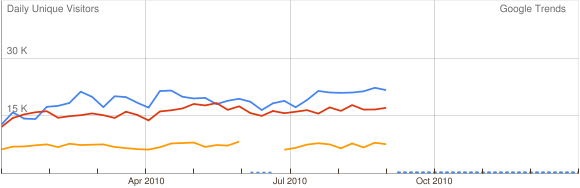

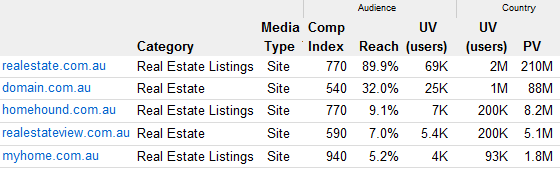

In Australia there really are three tiers of portals based on traffic levels. Tier 1 includes Realestate.com.au and Domain.com.au. Tier 2 includes Realestateview.com.au, Homehound.com.au and Myhome.com.au. The last tier covers everybody else.

Traffic

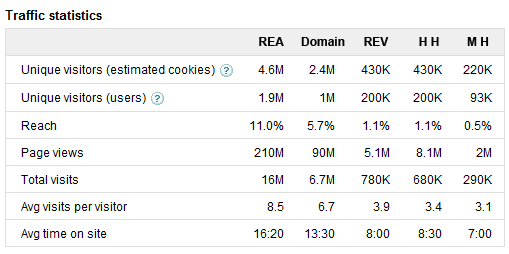

When you compare the results of the top two portals against the rest, a few things really stand out. Both Domain and Realestate.com.au are far superior across all metrics, but of particular note are the pages per visit and time on site statistics.

The clearest way to demonstrate this difference is by comparing the time visitors spend on each site. This ignores traffic counts and looks at how well each site engages visitors and keeps them on the site. Generally, the longer the session time the better. Visitors to Realestate.com.au spend nearly 16:20 on the site every visit, whereas the industry-sponsored Realestateview can only manage to hang on to visitors for 7:50.

Another metric that really shows the difference between the top two tiers is the number of visits each visitor makes to the site. The average Realestate.com.au visitor returns 8.5 times, against just under 4 times for Realestateview.com.au. This shows the effectiveness of the email alerts, property brochures and the general desire for visitors to return to continue their property search.
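The two engagement metrics above (visits per visitor and time per visit) can be multiplied into a rough total time per visitor. The sketch below is only a back-of-envelope illustration: the function names are hypothetical, "just under 4" is rounded to 4.0, and it assumes both metrics cover the same reporting period.

```python
def to_minutes(mm_ss: str) -> float:
    """Convert a 'minutes:seconds' string such as '16:20' to minutes."""
    minutes, seconds = mm_ss.split(":")
    return int(minutes) + int(seconds) / 60


def minutes_per_visitor(visits_per_visitor: float, time_per_visit: str) -> float:
    """Rough total time per visitor: number of visits times average visit length."""
    return visits_per_visitor * to_minutes(time_per_visit)


# Figures quoted in the article; 4.0 is a rounding of "just under 4" (assumption).
sites = {
    "Realestate.com.au": (8.5, "16:20"),
    "Realestateview.com.au": (4.0, "7:50"),
}

for name, (visits, visit_time) in sites.items():
    total = minutes_per_visitor(visits, visit_time)
    print(f"{name}: about {total:.0f} minutes per visitor")
```

On those rounded inputs this works out to roughly 139 minutes per visitor for Realestate.com.au against about 31 for Realestateview.com.au, so the gap in combined engagement is wider than either metric shows on its own.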

Google’s statistics also confirm that the Property Seeker stats trotted out by RealEstate.com.au are totally exaggerated, but we all knew that, didn’t we? We have already discussed this a number of times, but Google’s stats show the ratio of real people to cookie-based unique visitors is about 4:10, which means their claim of reaching as many as 6 million “Property Seekers” is out by nearly 4 million. I guess if you are going to exaggerate you might as well do it with some gusto.
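For anyone who wants to check the arithmetic, here is a minimal sketch. It assumes the 6 million "Property Seekers" figure is a cookie-based unique visitor count and that the 4:10 people-to-cookies ratio applies evenly; the function name is hypothetical.

```python
def estimate_people(unique_cookies: float, people_per_10_cookies: float = 4.0) -> float:
    """Scale a cookie-based unique visitor count down to an estimate of real people."""
    return unique_cookies * people_per_10_cookies / 10.0


# Upper end of the "Property Seekers" claim, treated as a cookie count (assumption).
claimed_property_seekers = 6_000_000

estimated_people = estimate_people(claimed_property_seekers)
overstatement = claimed_property_seekers - estimated_people

print(f"Estimated real people: {estimated_people:,.0f}")
print(f"Overstatement:         {overstatement:,.0f}")
```

That gives about 2.4 million real people and an overstatement of about 3.6 million, which is the "nearly 4 million" mentioned above.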

Demographics

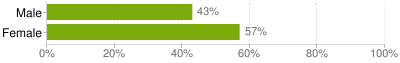

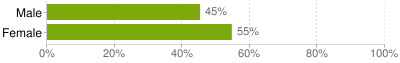

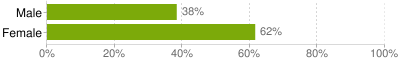

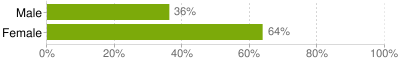

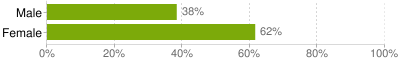

The last thing that really stands out between the top two tiers is their audience demographics. All portals have a predominantly female audience, but Realestate.com.au and Domain.com.au both attract a significantly higher share of males (43% of total visitors), whereas males make up just 38% of visitors across the second tier.

Realestate.com.au

Domain.com.au

RealestateView.com.au

Homehound.com.au

Myhome.com.au

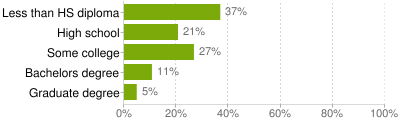

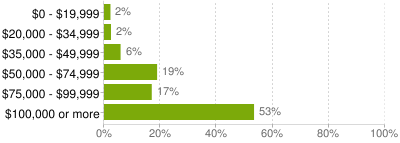

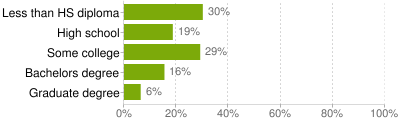

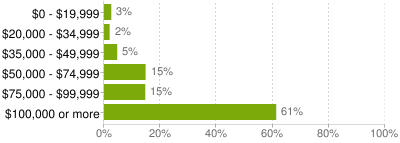

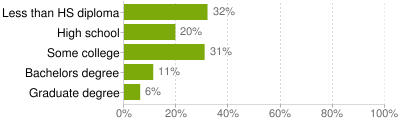

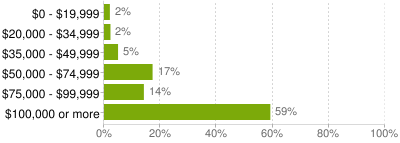

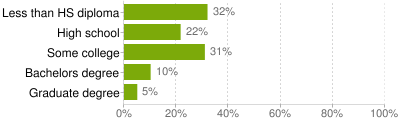

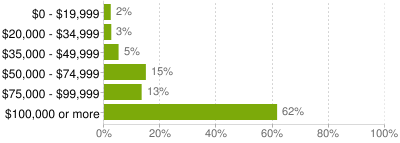

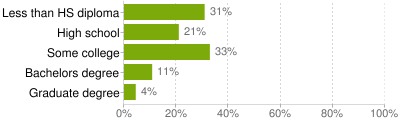

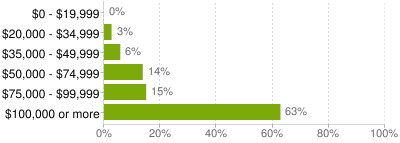

Despite their similarities, there are still significant differences between Realestate.com.au and Domain.com.au. Domain.com.au’s audience is not only better educated but also has a significantly higher household income.

Realestate.com.au

Domain.com.au

RealestateView.com.au

Homehound.com.au

Myhome.com.au

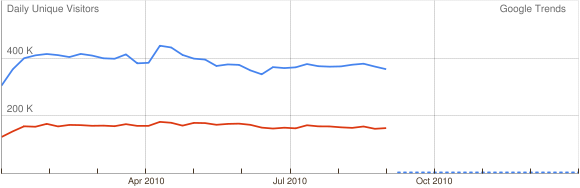

Despite keeping visitors longer and having them return more often, the trend for 2010 is not a great one for the Tier 1 portals. Realestateview.com.au, Homehound.com.au and Myhome.com.au have all made significant increases in their traffic during 2010, whilst Domain has stayed fairly stable and Realestate.com.au has dropped back significantly.

This loss in traffic started with the new site but has since stabilised. Overall, though, REA has dropped more monthly traffic from its peak in 2010 than all the Tier 2 portals combined. Despite significant percentage gains by the Tier 2 portals, Realestate.com.au is so far out in front that the gap seems hardly dented. The real damage will be caused if the trend continues, and if it does it will make some people very nervous at REA, as they have nothing to gain and everything to lose.

Tier 1

Market Reach

At the top of the article we looked at each site’s total reach against all Australian internet users. When you filter the audience down to those who are looking for real estate on the net, the market reach becomes far more impressive.

What is incredible here is that Homehound.com.au and Realestateview.com.au are really closing in on Domain as far as market reach is concerned. With Realestateview.com.au recently purchasing Myhome.com.au, they can start putting pressure on Domain if they can add Myhome’s traffic to the mix and not lose it. Realestateview.com.au will also need to find out what they are doing wrong with session times, make their site more sticky and increase visitor loyalty.

So no matter what metric you use, Realestate.com.au is by far the number 1 portal in Australia. It gets more traffic and more repeat business, and is more engaging than any of its competition. So why they try to fake their Property Seeker stats is beyond me.

(edit: Had to fix up the Domain Education graph as it was pointing to the REA one)

13 Comments

Simon Baker

Glenn

Great article and clearly articulates the gap and challenges that the tier 2 players face.

What is interesting when looking at the stats quoted by realestate.com.au is that they are really quoting the Unique Browser stats. However, this is becoming an increasingly irrelevant measure as users look at the sites using more and more devices.

For example, if I look at realestate.com.au on my laptop, my iPhone and my iPad, I will be at least 3 unique browsers.

Therefore, as these devices become more prevalent, it is almost impossible for a market-leading site to go backwards in unique browsers.

The real measure that should be looked at is total time on site … not the session duration but the total minutes spent on the site by all users. This cuts through the marketing waffle and tells us the true engagement people are having with the site.

Thanks

Simon Baker

CEO

Classified Ad Ventures

Nick

Keep in mind that these stats are good guesses but not much more. :p

Google uses an indirect form of measurement, so it is all extrapolation. Comparing numbers between portals should be OK, but the numbers themselves can be quite off.

“What is interesting when looking at the stats quoted by realestate.com.au is that they are really quoting the Unique Browser stats”

Actually, don’t they fudge their numbers by counting unique browsers per day and adding up each day for a monthly total? So if you visited yesterday and came back from the same computer today, you’d count as two.

Glenn Batten

Nick,

Google’s statistics are far more than good guesses. They have multiple data sources that they combine to bring these statistics together:

Google Search

Google Web History

Google Toolbar

Google Chrome

Google Analytics

Google Adsense

Google Doubleclick

etc etc

This is as independent as it gets. Because of Google accounts and Google Toolbar data, they can track user behaviour across multiple browsers, which is something cookie-based measurement cannot do.

If a user deletes his cookies, the Google Toolbar still identifies them as the same person, no matter if they log in on their phone, their iPad or their PC.

Naturally there is extrapolation involved, but that is true of most statistics in everyday life, even off the web, such as TV ratings and political polls.

Where it loses accuracy is when the total visitor numbers get too low. Once the potential for error becomes too large, they don’t show anything. “In addition, DoubleClick Ad Planner only shows results for sites that receive a significant amount of traffic, and enforces minimum thresholds for inclusion in the tool.” For most of these sites it’s not really an issue, but for some of the groups in the next article we can’t get visitor numbers because their traffic is too low for accurate measurement.

I have enough faith in Google mathematicians, statisticians and programmers that this data is far beyond mere guesses…

Glenn Batten

Nick,

Besides, how could you call opt-in Google Analytics, Google accounts and Toolbar traffic indirect? Those features of Google’s data collection are far more accurate than relying on cookies.

Peter Carabot

G’day. Great to finally see a collection of data for the 4 majors. I would not put too much faith in Avg Time on Site, especially with REA. Maybe we live in an REA black spot, but REV loads much faster and the pages are served very, very fast. Waiting for REA to serve pages is like watching grass grow….

I suppose the time it takes is counted as time on site…….

I also agree with Simon Baker’s comment on the unique browsers count…..

Thank you again

Peter Carabot

Mareeba First National RE

Bill burdin

Is there a metric to measure comparisons by region? For example, in the ACT, AllHomes is dominant, attracting 90% of property seekers from the ACT region to their portal. I would guess that on the NSW east coast, not including Sydney, Realestworld would be fairly dominant.

Greg Vincent

Great post, Glenn. I’m not sure if you are aware, but it appears that REIWA.com.au gets more UVs than MyHome.com.au?

There are 240K UVs (estimated cookies) and 110K UVs (users) for REIWA.com.au: https://www.google.com/adplanner/planning/site_profile#siteDetails?identifier=reiwa.com.au&lp=true

Nick

Glenn, they do not correlate some of the data points you mentioned, such as web histories, and they only use Google Analytics data if the site has expressly given them permission, which most don’t.

For example, for realestate.com.au they only use these as datapoints: Google Search, Google Toolbar (only if the extended features setting is turned on) and Google Doubleclick.

RE.com.au doesn’t publish their analytics data, Google Chrome doesn’t report your browsing habits in any way, people use other search engines and don’t have the toolbar installed, and Doubleclick must be the most blocked ad provider.

It’s a good guess, but it’s still a guess, and there is no direct measuring.

Glenn Batten

Nick,

There is a huge difference between a guess and an estimate and I think you are confusing the two.

There is no overwhelming number of posts on the web about how inaccurate it is. There were questions raised about how accurate it would be when it launched, but since then all is quiet.

All Google Analytics data is used in Adplanner stats, but data from anybody who has not opted in remains anonymous and is only used at the “macro” level.

Care to share how would you know if REA opts in or not?

You said that there were only three data sources for the realestate.com.au statistics, but that is just not true.

You said “Google Search, Google Toolbar (only if the extended features setting is turned on) and Google Doubleclick.”

Why do you think it is just these three? In their data sources they list other third-party market research. That is not in there for the fun of it.

They state that Unique Visitors and Market Reach do not come from any analytics data unless fully opted in… and that they come from other data sources. They don’t state which ones, so how do you know where it is from and that it is just a guess?

Either REA opts in and that is another data source on top of your three (accurate Google Analytics data)… or they don’t opt in, in which case their data is still added in but only at a macro level, and another third-party data source is used in addition to your three. Either way, that makes at least one more data source.

Google openly declares that Demographics comes from another third-party provider. They don’t name which one, but state that “The third-party demographic data is licensed from an industry-accepted consumer research panel operated according to industry best practices by a full-service research firm.” So that’s at least one more data source you did not count on.

How do you know they are wrong and are just guessing?

You must know the companies involved in creating both of those metrics for Google, or else are you just guessing??

Because Google has opt-in data, they can compare it directly against these third-party data sources to make the information they have on any site that does not opt in more accurate.

Google Adplanner has apparently just been reviewed by the Media Rating Council for accreditation. Google already has many of its components (AdWords, DoubleClick etc.) approved. According to the MRC website, Nielsen NetRatings is currently under review for accreditation.

But maybe they are fooling everybody and are just guessing all along 🙂

Glenn Batten

Google has also confirmed that they “extrapolate website traffic from sample data we collect from a variety of sources.” To me this part of the equation seems similar to the Hitwise method.

Ross Fazel

A timely missive, Glenn, given the Internet Advertising Bureau’s current deliberations on tenders for internet audience measurement – more info here http://www.iabaustralia.com.au/index.php?/news/press/iab_australia_announces_tender_for_online_audience_measurement_services

Nick

“Care to share how would you know if REA opts in or not?”

Because it has a little icon next to each stat that uses opt-in Analytics data. If it’s not there then Analytics isn’t being used.

“In their data sources they list other third-party market research.”

Perhaps, but my point is the numbers aren’t direct measurements. Unless you get the website logs and/or place code on the site, it’s not a direct measurement.

They get trends and other data from various sources and extrapolate it over the whole net population. It’s an educated guess, but it’s still a guess.

Only RealEstate.com.au (for example) has the server logs which tell the real story, and I doubt they’d give them out to anyone let alone their parent company’s arch nemesis.

Glenn Batten

Nick,

What server logs provide demographic data for this “real story” you are talking about?? In fact what publicly available demographic data for any website is not extrapolated from a sample??

Educated or not, these are not guesses. They are estimates, and they have corresponding error rates, using what is about to be (assuming they get approved) industry-recognised and approved procedures and methodologies. Google, because of its access to its own data sources and third-party data, can reduce the error rate by cross-referencing many data points… and there are certainly far more than the three you nominated.

Even server logs have error rates, because you can’t identify unique users and you certainly cannot identify demographic information.

Cookie/JavaScript-based analytics also have their own problems, discussed on here many times.

I take it you also consider Hitwise results as purely a guess as well?

You stick in the guess camp… and I will happily sit in the estimate camp I think 🙂